The same human who helped create the AI had only one task at this moment: move the stone to its place on the board as the AI instructed. The move seemed bad at first; only later did it become clear that the AI was playing in a way we humans could learn from. This is a scene from AlphaGo – The Movie, which celebrates the humans who created such a powerful artificial intelligence (AI) even as we feel for the plight of the human opponent. Programming an AI to play Go presents a problem that also occurs in launch and space systems design, more so as we stray far from what’s known.

“The professional commentators almost unanimously said that not a single human player would have chosen move 37.”

AlphaGo – The Movie

I’ve written on reusability as key to sustainability, and on the sheer number of design and technology decisions it involves. First come the “what” decisions: say, legs and fins vs. wings and things, or a middle path, with even more decisions past each fork in the road. On top of those come the “how” decisions about organizing people to get it all done. There is product and there is process. Human intuition, ingenuity, and bursts of inspiration are irreplaceable, yet as our problems get more complex, could we use those same powers to create a helper?

Go has about 10 to the power of 170 possible board combinations (far more than chess). Back in 2013, finding that even a simple model for a reusable launch vehicle design created a vast number of possible combinations, I dived into genetic algorithms (a modest kind of AI). The design model I created contains only 97 inputs with 2 to 5 choices each (314 unique selections in all). In NASA-speak, we call this a “simple” model – regardless of how many years of work went into it! Even so, this model’s total number of unique design input sets reaches 10 to the power of 47. Not quite Go, but in the neighborhood.
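As a rough check on that arithmetic, here is a minimal Python sketch. The actual mix of 2-, 3-, 4-, and 5-choice inputs in the model isn't given here, so the sketch assumes nothing about it: it simply enumerates every mix consistent with 97 inputs and 314 total selections, then reports the smallest and largest design spaces those mixes could produce. The function and variable names are illustrative only.

```python
from math import prod

N_INPUTS = 97          # number of design inputs (from the text)
N_SELECTIONS = 314     # total selections across all inputs (from the text)
CHOICES = (2, 3, 4, 5) # each input offers 2 to 5 choices (from the text)


def feasible_mixes():
    """Yield (n2, n3, n4, n5): how many inputs have 2, 3, 4, or 5 choices."""
    for n2 in range(N_INPUTS + 1):
        for n3 in range(N_INPUTS + 1 - n2):
            for n4 in range(N_INPUTS + 1 - n2 - n3):
                n5 = N_INPUTS - n2 - n3 - n4
                if 2 * n2 + 3 * n3 + 4 * n4 + 5 * n5 == N_SELECTIONS:
                    yield (n2, n3, n4, n5)


def design_space_size(mix):
    """Number of unique input sets: the product of choices over all inputs."""
    return prod(c ** n for c, n in zip(CHOICES, mix))


def order_of_magnitude(n):
    """Power of ten of a positive integer, e.g. 1234 -> 3."""
    return len(str(n)) - 1


sizes = [design_space_size(mix) for mix in feasible_mixes()]
smallest, largest = min(sizes), max(sizes)

print(f"smallest possible design space: ~10^{order_of_magnitude(smallest)}")
print(f"largest possible design space:  ~10^{order_of_magnitude(largest)}")
```

Whatever the real mix is, the total lands between roughly 10 to the power of 45 and 10 to the power of 49, which brackets the 10 to the power of 47 quoted above.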

Checking every combination to find the best design would be impossible, and also beside the point, because the way these design choices interact is complex. It’s the same as in life: a choice may be great for you here and now but not so much in the future, or vice versa. To boot, it’s all limited in the end by a practical matter – your available funds. But as AlphaGo showed, winning the game can be about winning just enough. Completely routing the enemy is simply wasteful.
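To make the idea of searching rather than enumerating concrete, here is a minimal genetic-algorithm sketch in Python. It is not the model described above: the cost tables are random placeholders, the budget is arbitrary, and every name (`upfront`, `recurring`, `BUDGET`, `score`, `evolve`) is hypothetical. It only illustrates the general technique of evolving a population of design choices toward low future cost while penalizing designs that exceed the available funds.

```python
import random

rng = random.Random(37)  # fixed seed so the toy run is repeatable

# Hypothetical toy problem: 97 inputs, each with 2 to 5 choices.
N_INPUTS = 97
n_choices = [rng.randint(2, 5) for _ in range(N_INPUTS)]
# Placeholder cost tables: one up-front and one recurring cost per choice.
upfront = [[rng.uniform(1, 10) for _ in range(k)] for k in n_choices]
recurring = [[rng.uniform(1, 10) for _ in range(k)] for k in n_choices]
BUDGET = 5.0 * N_INPUTS  # arbitrary cap on total up-front spending


def score(design):
    """Lower is better: recurring cost plus a penalty for exceeding BUDGET."""
    up = sum(upfront[i][c] for i, c in enumerate(design))
    rec = sum(recurring[i][c] for i, c in enumerate(design))
    return rec + 10.0 * max(0.0, up - BUDGET)


def random_design():
    return [rng.randrange(k) for k in n_choices]


def crossover(a, b):
    """Uniform crossover: take each input's choice from either parent."""
    return [x if rng.random() < 0.5 else y for x, y in zip(a, b)]


def mutate(design, rate=0.02):
    """Occasionally re-roll an input's choice."""
    return [rng.randrange(n_choices[i]) if rng.random() < rate else c
            for i, c in enumerate(design)]


def tournament(pop, k=3):
    """Pick the best of k randomly sampled designs."""
    return min(rng.sample(pop, k), key=score)


def evolve(pop_size=60, generations=80):
    pop = [random_design() for _ in range(pop_size)]
    history = [min(map(score, pop))]
    for _ in range(generations):
        elite = min(pop, key=score)  # elitism: never lose the best so far
        children = [mutate(crossover(tournament(pop), tournament(pop)))
                    for _ in range(pop_size - 1)]
        pop = [elite] + children
        history.append(min(map(score, pop)))
    return min(pop, key=score), history


best, history = evolve()
print(f"best score at start: {history[0]:.1f}, at end: {history[-1]:.1f}")
```

The run scores a few thousand designs out of an astronomically large space. Nothing guarantees the result is the single best design, only one that is good enough within the budget, which is the spirit of winning by just enough.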

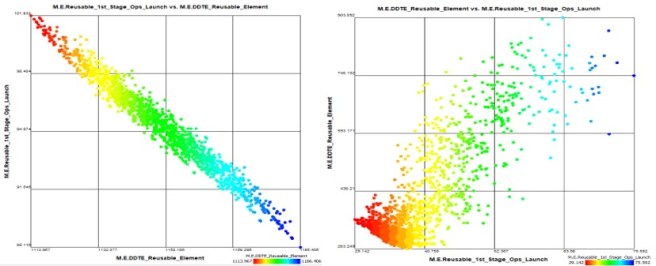

The first thing the AI does is re-orient your reality

You may have told the AI the flavor of each little decision. Then it’s the AI’s turn to tell you what it all means when put together – and it may be a surprise, like move 37. In the simple view, it’s pay me now or pay me later, as seen on the graph below (left): I *must* spend more now to get a future benefit, always, right? In practice, it’s much more complex, because what we want is affected by how we do it. That is where we find counter-intuitive design and organizational combinations that spend less up-front than another path yet still reduce future costs – as in the graph below (right).

Of course, moving away from engineering circles, the result on the right is not counter-intuitive at all. NASA discovered this when it looked at how its models failed so miserably in predicting Falcon 9 costs. We try, learn, and find that sometimes you can have it all. You can spend less, much less even, yet get more – all by turning the knob dramatically on “how” to get “what” you want.

Such an AI approach does not make Starship RUDs any less necessary. Experimenting leads to learning, and there is no replacement for experimenting with different kinds of real space systems. In the real world, attendance is mandatory. Yet, as we learn, how does each player decide the next moves in this game? Human intuition, creativity, and inspiration will never be replaced. But perhaps one day the players designing reusable launchers, asteroid miners, and refueling stages will get just as much help from an AI they created to take in all the possibilities and make move 37.

Also see:

- AlphaGo – The Movie (free on YouTube)
